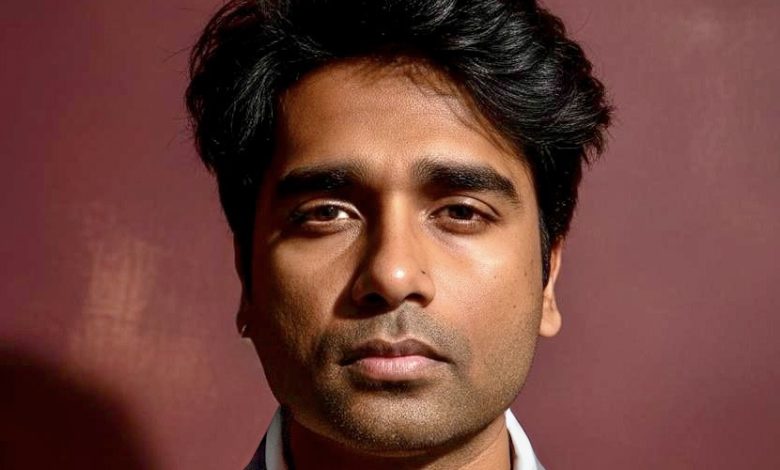

Ranjan Ebenezer is a Senior Manager of BI and Analytics with more than 16 years of experience building business intelligence and data infrastructure across enterprise technology environments. His work focuses on the foundational layer that determines whether AI deployments succeed: the data pipelines, reconciliation frameworks, and multi-ERP integrations that turn fragmented enterprise data into reliable inputs. He has spent much of his career uncovering and resolving the kind of structural data problems that AI alone cannot fix and often makes worse. In this conversation, he discusses why so many enterprise AI initiatives stall before producing value, and what foundational data work has to be in place first.

You’ve worked across Oracle, SAP, NetSuite, and Salesforce environments simultaneously. What does AI actually encounter when it’s dropped into that kind of fragmented data landscape?

In a fragmented environment spread across Oracle, SAP, NetSuite, and Salesforce, AI usually runs into siloed data, inconsistent definitions, and a lot of system noise. When those platforms are not connected through a unified architecture, the result is conflicting logic and uneven formatting that make the insights far less trustworthy. My approach is to build custom backend pipelines that clean, normalize, and connect the data first, so the AI layer is working from accurate, consistent information. That process creates a true single source of truth, turning disconnected records into something the business can actually rely on. Once that foundation is in place, AI can do much more than basic analysis. It can start identifying opportunities, closing gaps, and helping drive proactive, multimillion-dollar revenue recovery.
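To make the "clean, normalize, and connect" step concrete, here is a minimal sketch of mapping records from two source systems onto one shared shape. It is an illustration only, not Ebenezer's actual pipeline: the system names, field names (`CUST_NO`, `AMT`, `customerId`, `amount_cents`), and the ID-cleaning rule are all hypothetical assumptions.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Invoice:
    """Common shape that every source system is mapped into (hypothetical)."""
    source: str
    customer_id: str
    amount_usd: Decimal


def normalize_customer_id(raw: str) -> str:
    # Assumed rule: IDs differ only by padding, case, and leading zeros.
    return raw.strip().upper().lstrip("0")


def from_system_a(row: dict) -> Invoice:
    # Hypothetical system A: amounts arrive as decimal strings in dollars.
    return Invoice(
        source="system_a",
        customer_id=normalize_customer_id(row["CUST_NO"]),
        amount_usd=Decimal(row["AMT"]),
    )


def from_system_b(row: dict) -> Invoice:
    # Hypothetical system B: amounts arrive as integer cents.
    return Invoice(
        source="system_b",
        customer_id=normalize_customer_id(row["customerId"]),
        amount_usd=Decimal(row["amount_cents"]) / 100,
    )
```

Once both systems map to the same `Invoice` shape, the same customer and the same amount compare as equal regardless of where the record originated, which is the precondition for any downstream analysis.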

When an AI initiative underperforms in an enterprise setting, where do you usually find the real problem?

Enterprise AI usually falls short not because the algorithms are weak, but because the data foundation underneath them is inconsistent and disconnected from how the business actually works. In many cases, the real problem is a gap between the technical model and the quote-to-cash processes it is supposed to support. When data across systems like SAP and Oracle is fragmented, unclean, or misaligned, AI tends to produce noise instead of useful insight. Real results come from a techno-functional approach that connects the business process to the data architecture, so AI is working with reliable signals rather than just added technical complexity.

You recovered more than $100M in revenue through data reconciliation work. How much of that problem would have gone undetected (or gotten worse) if an AI layer had been added on top of it before the underlying data was fixed?

If AI had been layered on too early, the $100M+ in revenue leakage probably would have stayed hidden or even gotten worse by speeding up flawed billing logic. When you apply AI to broken data structures, it does not fix the problem. It often amplifies it, creating an automated blind spot where bad outputs are trusted simply because they come from a sophisticated system. My focus was to fix the underlying data flow across disconnected platforms first, so any intelligence layer would be working from validated, reliable information. By addressing those structural issues both manually and through custom algorithms, I was able to prevent AI from scaling the problem and instead put it in a position to generate real business value. This experience made one thing clear: when financial stakes are high, strong data foundations have to come before algorithmic sophistication.
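As a rough illustration of the kind of reconciliation check described here, the sketch below compares what contracts say should be billed against what was actually billed and flags the shortfalls. The function name, the dictionary inputs, and the tolerance value are assumptions made for the example; the interview does not describe the actual algorithms used.

```python
from decimal import Decimal


def find_leakage(
    contracted: dict[str, Decimal],
    billed: dict[str, Decimal],
    tolerance: Decimal = Decimal("0.01"),
) -> dict[str, Decimal]:
    """Return the shortfall per contract where billing is below the contracted amount."""
    gaps: dict[str, Decimal] = {}
    for contract_id, expected in contracted.items():
        # A contract with no billing record at all counts as fully unbilled.
        actual = billed.get(contract_id, Decimal("0"))
        shortfall = expected - actual
        if shortfall > tolerance:
            gaps[contract_id] = shortfall
    return gaps


# Hypothetical data: C2 is under-billed and C3 was never billed.
contracted = {"C1": Decimal("1000"), "C2": Decimal("500"), "C3": Decimal("250")}
billed = {"C1": Decimal("1000"), "C2": Decimal("420")}
gaps = find_leakage(contracted, billed)
```

A check like this only works if contract IDs and amounts already agree in format across systems, which is why the structural cleanup has to come first.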

What has to be true about a company’s data infrastructure before an AI deployment is worth attempting?

Before AI is ready to deliver real value, a company needs a reliable single source of truth across its data ecosystem. That means bringing together fragmented systems like SAP and Salesforce into a unified structure where the data is clean, consistent, and governed by the same logic. Without that foundation, AI does not solve inefficiencies. It usually automates them and can even hide serious financial risks behind unreliable outputs. The system also has to be built with the business in mind, so the signals AI produces are relevant and useful for the teams making operational decisions. In the end, AI is only worth deploying when the data is trustworthy enough to support action without constant manual checking.

You’ve built internal tooling from scratch to support BI operations. How does that product-minded approach change how you think about AI readiness?

A product-minded approach means focusing less on building features for their own sake and more on solving real business problems with clear, measurable results. When I build internal BI tools, I treat data as a product that has to be usable, reliable, and relevant to the people making decisions. From that perspective, AI readiness is not really about how advanced the algorithm is. It is about whether the underlying data infrastructure is strong enough to earn user trust and support adoption. By designing around the end user’s workflow, I make sure AI becomes a practical tool for decision-making rather than just another isolated technical layer. That is what turns raw data into something the business can use immediately to create tangible value.

Most executives want AI results without investing in the data work that makes those results possible. How do you have that conversation?

I frame it in the terms executives care about most: ROI. I explain that putting AI on top of messy, unrefined data is not a shortcut to better decisions. It is a financial risk that can scale errors, hide major revenue leakage, and create false confidence in the output. I’ve seen that firsthand in situations involving more than $100 million in lost revenue, so I make the case that data integrity is not just technical cleanup. It is what protects the business and makes AI worth the investment in the first place. High-quality results only come from a strong data foundation, and in my experience, cutting corners in data architecture ends up being the most expensive way to implement AI.