Vlad Dukhnov is a product designer working at the intersection of AI, robotics, and complex real-world systems. With nearly a decade of experience across startups and large-scale platforms, he has helped companies design products that operate beyond the screen — from autonomous systems to industrial and operational interfaces.

His work focuses on a fundamental shift in design: moving from static, predictable interfaces to dynamic systems that learn, adapt, and operate in uncertain environments. Rather than designing screens, Dukhnov designs behavior — shaping how intelligent systems communicate, make decisions, and integrate into human workflows.

As AI continues to redefine how products are built and used, his approach reflects a broader transformation in the role of design itself.

What pulled you into designing for AI products?

We got into AI because it was a natural evolution of the market. It wasn’t a dramatic pivot or a sudden realization. It became obvious that this is where the industry is heading, and if you are building products, you either move with that shift or you fall behind. We understood early that we needed to be at the forefront of this change, not reacting to it later.

At the same time, AI is not just another feature or technology layer. It fundamentally changes how products behave and how users interact with them. That made it much more interesting from a design perspective. It forces you to rethink not only interfaces, but the entire concept of interaction between humans and systems.

What are you actually designing when working with AI products?

We’re no longer designing fixed flows or predefined outcomes. In traditional product design, you can map out the entire experience. You know what happens after each action. You can predict the system behavior almost completely.

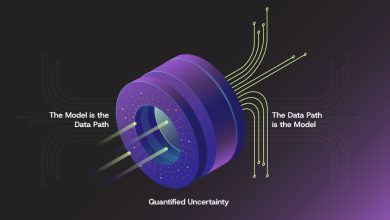

Instead, we’re designing potential solutions: systems that can respond to the wide range of situations people run into when they use these products in real-world conditions. You are not defining a single path. You are shaping a space of possible interactions.

That changes the role of the designer. You are less focused on screens and more focused on how the system behaves under different conditions. You design frameworks rather than flows.

How has the role of the interface changed?

It’s becoming less of a traditional UI and more of a negotiation. You ask, the system responds, and theoretically you can ask for anything.

That removes the rigid boundaries that traditional interfaces had. Before, interaction was limited by what the interface allowed you to do. Buttons, menus, and flows defined the scope of possible actions. If something wasn’t designed, it simply wasn’t possible.

Now, interaction is open-ended.

The only real limit is your imagination and how well the system understands your intent. That creates a completely different dynamic between the user and the product. Instead of navigating a system, you are communicating with it.

What’s different about designing for AI in real-world environments?

The real world is unpredictable in ways that most digital designers underestimate.

A user might be on a construction site, dealing with noise, time pressure, and safety risks. Their primary means of communication might be a radio. Or they might be operating a machine through a joystick, with very little attention left for anything else.

In these environments, the interface is only one small part of a much larger system.

When you design for AI, robotics, or automation, you’re not just designing for one system. You’re designing for unpredictability on both sides. The environment is unpredictable, and the AI system itself can behave in ways that are not fully deterministic.

That means you have to anticipate conditions you cannot fully control. You need to think about failure modes, edge cases, and degraded states much more seriously than in traditional software.

How do you design for trust in AI systems?

If an AI system makes a mistake, users don’t blame themselves. They blame the system. That makes trust one of the most critical aspects of the product.

We focus heavily on transparency. The goal is to show what the AI is doing without overwhelming the user. That includes what data it used, what it analyzed, and how it arrived at a particular outcome.

At the same time, not every user needs the same level of detail. Some users just want the result. Others want to understand the reasoning.

So the key is layering. You provide a simple answer by default, but always allow the user to go deeper. The system should be able to answer the question “why” at any point.

That ability to inspect the reasoning is what builds trust over time.
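As a rough illustration of the layering idea described in this answer, the TypeScript sketch below models an answer with a short default summary and deeper levels the user can opt into. It is only a sketch under stated assumptions: `LayeredAnswer`, `DetailLevel`, and `explain` are hypothetical names invented for this example, not part of any product or library mentioned in the interview.

```typescript
// Hypothetical shape for a layered AI answer; all names are illustrative.
interface LayeredAnswer {
  summary: string;       // layer 0: the result shown by default
  reasoning: string[];   // layer 1: how the system arrived at the result
  dataSources: string[]; // layer 2: what data it used
}

type DetailLevel = 0 | 1 | 2;

// Returns the lines to display at a given depth. Each level adds to the
// previous one, so the default view stays short.
function explain(answer: LayeredAnswer, level: DetailLevel = 0): string[] {
  const lines = [answer.summary];
  if (level >= 1) {
    lines.push(...answer.reasoning.map((step) => `Why: ${step}`));
  }
  if (level >= 2) {
    lines.push(...answer.dataSources.map((src) => `Data: ${src}`));
  }
  return lines;
}
```

Calling `explain(answer)` returns only the summary, while `explain(answer, 2)` adds the reasoning steps and data sources, so the "why" is available on request without crowding the default view.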

How do you think about speed vs. understanding?

There’s no point in speed if the user doesn’t understand what’s happening. A fast system that feels like a black box is difficult to trust and even harder to use effectively.

At the same time, you cannot sacrifice speed completely. If the system becomes too slow, users disengage and the value of automation disappears.

So it becomes a balance between speed and comprehension. The system needs to feel responsive, but also legible.

One approach is progressive disclosure. You can provide quick initial feedback and then refine or expand the result over time. That allows users to stay engaged while still understanding what the system is doing.
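One possible way to express this quick-then-refined pattern in code is an async generator that emits a fast first answer and follows it with a refined one. This is a minimal sketch, not a description of any real system: `Update`, `respond`, and `collect` are hypothetical names, and the `quick` and `refine` callbacks stand in for whatever a real system would actually do.

```typescript
// Hypothetical stages of a progressively disclosed result.
interface Update {
  stage: "quick" | "refined";
  text: string;
}

// Yields a fast first answer, then a refined one once the slower work
// finishes. Both callbacks are placeholders supplied by the caller.
async function* respond(
  quick: () => Promise<string>,
  refine: (draft: string) => Promise<string>,
): AsyncGenerator<Update> {
  const draft = await quick();
  yield { stage: "quick", text: draft };
  yield { stage: "refined", text: await refine(draft) };
}

// Collects every update in arrival order. A real interface would render
// each update as it arrives instead of waiting for the full list.
async function collect(updates: AsyncIterable<Update>): Promise<Update[]> {
  const seen: Update[] = [];
  for await (const update of updates) {
    seen.push(update);
  }
  return seen;
}
```

Because the consumer receives the quick update before the refinement has started, the interface can show something immediately and replace or extend it later.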

Does the role of the designer change when working with AI?

Yes, significantly.

You’re no longer just designing interfaces. You’re defining rules and shaping potential outcomes. Instead of fixed screens, you are designing how a system behaves in different situations.

This brings the role closer to something like a system designer or even a policymaker. You are making decisions about how the system should act, what it should prioritize, and how it should respond under uncertainty.

You are not just designing what the user sees. You are designing how the system thinks and communicates.

What do most teams get wrong about AI products?

Many teams fall into the same pattern. It’s always text, always chat, essentially another ChatGPT wrapper.

That approach is limiting. Text is only one way of interacting with a system, and it is not always the most efficient or natural one.

You can already see companies like OpenAI and Anthropic exploring ways to move beyond pure text interfaces. This is just the beginning.

We need more visual interfaces, more spatial interactions, and new ways to communicate with as little text as possible. The goal should not be to replicate conversation. It should be to find the most effective way to express intent and receive feedback.

Where do you see AI interfaces going next?

I don’t think the future of AI interfaces is the screen.

Screens are starting to show their age. They were designed for a different era of interaction, where everything had to be explicitly controlled and displayed.

The next step could involve neural interfaces or other forms of interaction that we haven’t fully discovered yet. It could also mean that interaction becomes more ambient.

Instead of being something you actively use, the system exists alongside you. It understands context, anticipates needs, and appears when necessary.

In that scenario, the interface is no longer something you open. It becomes part of your environment.

In one sentence, what is designing for AI?

It’s about finding a new language between humans and machines.